
Automated Scaling Listener

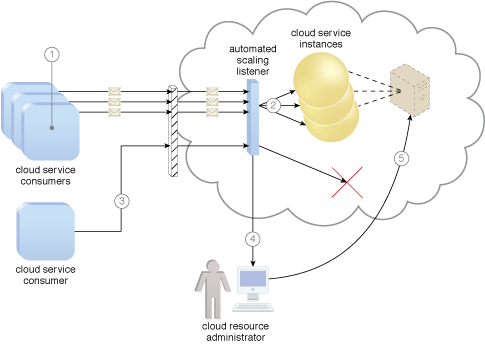

The automated scaling listener mechanism is a service agent that monitors and tracks communications between cloud service consumers and cloud services for dynamic scaling purposes. Automated scaling listeners are deployed within the cloud, typically near the firewall, from where they automatically track workload status information.

Workloads can be determined by the volume of cloud consumer-generated requests or via back-end processing demands triggered by certain types of requests. For example, a small amount of incoming data can result in a large amount of processing.

Automated scaling listeners can provide different types of responses to workload fluctuation conditions, such as:

- Automatically scaling IT resources out or in based on parameters previously defined by the cloud consumer (commonly referred to as auto-scaling).

- Automatically notifying the cloud consumer when workloads exceed current thresholds or fall below allocated resources, so that the cloud consumer can choose to adjust its current IT resource allocation.
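The two response types above can be sketched as a single decision function. This is a minimal illustration, not any provider's API: the `ScalingPolicy` fields, the `Action` names, and the use of requests per second per instance as the workload measure are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    SCALE_OUT = "scale_out"
    SCALE_IN = "scale_in"
    NOTIFY = "notify"
    NONE = "none"


@dataclass
class ScalingPolicy:
    # Hypothetical parameters a cloud consumer might define in advance.
    upper_threshold: float  # load per instance above which capacity is short
    lower_threshold: float  # load per instance below which capacity is idle
    auto_scale: bool        # True: scale automatically; False: only notify


def decide(policy: ScalingPolicy, requests_per_second: float, instances: int) -> Action:
    """Choose a response to the current workload for a pool of `instances`."""
    if instances < 1:
        raise ValueError("at least one instance is required")
    load_per_instance = requests_per_second / instances
    # Workload within the configured band: nothing to do.
    if policy.lower_threshold <= load_per_instance <= policy.upper_threshold:
        return Action.NONE
    # Outside the band, but the consumer asked only to be informed.
    if not policy.auto_scale:
        return Action.NOTIFY
    if load_per_instance > policy.upper_threshold:
        return Action.SCALE_OUT
    # Below the band: scale in, but never below a single instance.
    return Action.SCALE_IN if instances > 1 else Action.NONE
```

With `auto_scale=False`, the same thresholds produce only a notification, which leaves the allocation decision to the cloud consumer.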

Different cloud provider vendors have different names for service agents that act as automated scaling listeners.

Three cloud service consumers attempt to access one cloud service simultaneously (1). The automated scaling listener scales out and initiates the creation of three redundant instances of the service (2). A fourth cloud service consumer attempts to use the cloud service (3). Programmed to allow up to only three instances of the cloud service, the automated scaling listener rejects the fourth attempt and notifies the cloud consumer that the requested workload limit has been exceeded (4). The cloud consumer’s cloud resource administrator accesses the remote administration environment to adjust the provisioning setup and increase the redundant instance limit (5).
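The scenario above can be traced with a small simulation. This is a sketch under simplifying assumptions: each consumer is given its own service instance, and the class, method, and exception names (`AutomatedScalingListener`, `handle_request`, `set_max_instances`, `WorkloadLimitExceeded`) are hypothetical, chosen only for this illustration.

```python
class WorkloadLimitExceeded(Exception):
    """Raised when a request would need more instances than the limit allows."""


class AutomatedScalingListener:
    def __init__(self, max_instances):
        self.max_instances = max_instances
        self.active = {}  # consumer id -> instance number serving it

    def handle_request(self, consumer_id):
        """Return the instance serving this consumer, scaling out if allowed."""
        if consumer_id in self.active:
            return self.active[consumer_id]
        if len(self.active) >= self.max_instances:
            # Step 4: reject the attempt and report the exceeded limit.
            raise WorkloadLimitExceeded(
                f"limit of {self.max_instances} instances reached"
            )
        # Step 2: scale out by creating one more instance.
        instance = len(self.active) + 1
        self.active[consumer_id] = instance
        return instance

    def set_max_instances(self, new_limit):
        """Step 5: the resource administrator adjusts the provisioning setup."""
        if new_limit < len(self.active):
            raise ValueError("new limit is below the number of running instances")
        self.max_instances = new_limit


if __name__ == "__main__":
    listener = AutomatedScalingListener(max_instances=3)
    for consumer in ("A", "B", "C"):       # steps 1 and 2
        listener.handle_request(consumer)
    try:
        listener.handle_request("D")       # step 3
    except WorkloadLimitExceeded as err:
        print("rejected:", err)            # step 4
    listener.set_max_instances(4)          # step 5
    listener.handle_request("D")           # now accepted
```

Raising an exception for the rejected request stands in for the notification sent to the cloud consumer; a real listener would deliver that notice through whatever channel the provider offers.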

Related Patterns:

- Automated Administration

- Cross-Storage Device Vertical Tiering

- Dynamic Scalability

- Elastic Network Capacity

- Elastic Resource Capacity

- Intra-Storage Device Vertical Data Tiering

- Load Balanced Virtual Server Instances

- Logical Pod Container

- Serverless Deployment

- Storage Workload Management

- Sub-LUN Tiering

- Usage Monitoring

- Volatile Configuration

This mechanism is covered in CCP Module 2: Cloud Technology Concepts.

For more information regarding the Cloud Certified Professional (CCP) curriculum, visit www.arcitura.com/ccp.

This cloud computing mechanism is covered in:

Cloud Computing: Concepts, Technology & Architecture by Thomas Erl, Zaigham Mahmood, Ricardo Puttini (ISBN: 9780133387520, Hardcover, 260+ Illustrations, 528 pages)

For more information about this book, visit www.arcitura.com/books.