
Service Load Balancing (Erl, Naserpour)

How can a cloud service accommodate increasing workloads?

Problem

A single cloud service implementation has finite capacity. When its processing thresholds are exceeded, the result is runtime exceptions, failures, and degraded performance.

Solution

Redundant deployments of the cloud service are created and a load balancing system is added to dynamically distribute workloads across cloud service implementations.

Application

The duplicate cloud service implementations are organized into a resource pool. The load balancer is either positioned as an external component or built into the cloud service implementations, in which case the hosting servers balance workloads among themselves.

Mechanisms

Cloud Usage Monitor, Load Balancer, Resource Cluster, Resource Replication

Compound Patterns

Burst In, Burst Out to Private Cloud, Burst Out to Public Cloud, Cloud Authentication, Cloud Balancing, Elastic Environment, Infrastructure-as-a-Service (IaaS), Isolated Trust Boundary, Multitenant Environment, Platform-as-a-Service (PaaS), Private Cloud, Public Cloud, Resilient Environment, Resource Workload Management, Secure Burst Out to Private Cloud/Public Cloud, Software-as-a-Service (SaaS)

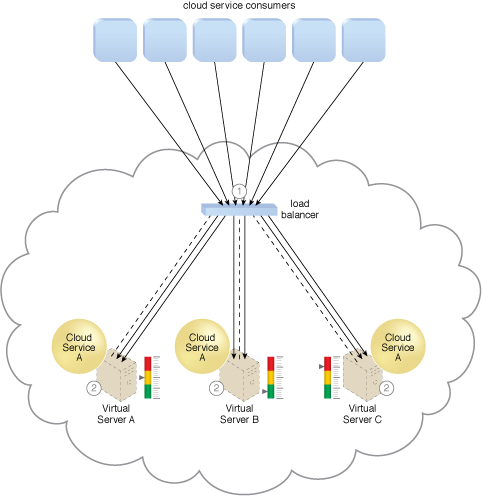

The load balancing agent intercepts messages sent by cloud service consumers (1) and forwards the messages at runtime to the virtual servers so that the workload processing is horizontally scaled (2).
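The interception and forwarding steps described above can be sketched in a few lines of Python. This is a minimal illustration, not the pattern's prescribed implementation: the `VirtualServer` and `LoadBalancingAgent` classes are hypothetical names, and round-robin is an assumed distribution policy, since the pattern itself does not specify one.

```python
from itertools import cycle


class VirtualServer:
    """Hypothetical stand-in for a virtual server hosting one copy of the cloud service."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.handled = 0  # number of messages this server has processed

    def handle(self, message: str) -> str:
        self.handled += 1
        return f"{self.name} processed {message}"


class LoadBalancingAgent:
    """External component that intercepts consumer messages (1)
    and forwards them to the pooled virtual servers (2)."""

    def __init__(self, pool: list[VirtualServer]) -> None:
        if not pool:
            raise ValueError("the resource pool must contain at least one server")
        # Assumption: simple round-robin rotation over the pool.
        self._rotation = cycle(pool)

    def intercept(self, message: str) -> str:
        target = next(self._rotation)
        return target.handle(message)


pool = [VirtualServer(f"Virtual Server {label}") for label in "ABC"]
agent = LoadBalancingAgent(pool)
responses = [agent.intercept(f"request-{i}") for i in range(6)]
```

With six requests and three servers, each server in this sketch handles two requests. A production load balancer would typically also account for server health and current load, which are omitted here.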

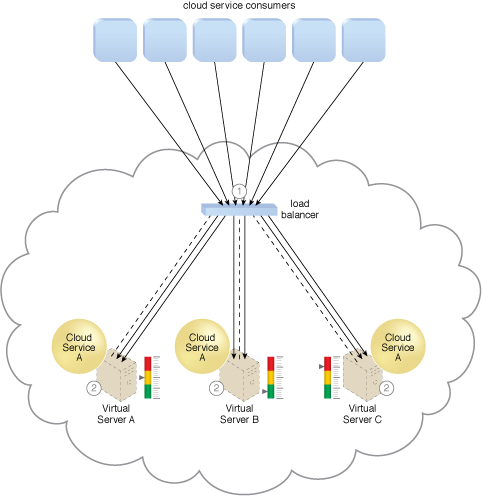

Cloud consumer requests are sent to Cloud Service A on Virtual Server A (1). The cloud service implementation includes built-in load balancing logic that is capable of distributing requests to the neighboring Cloud Service A implementations on Virtual Servers B and C (2).
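The built-in variant can be sketched in the same way. The example below is a simplified illustration under stated assumptions: the `CloudServiceA` class, its `capacity` threshold, and the "least-loaded neighbor" hand-off rule are all hypothetical, and the count of requests handled so far is used as a crude stand-in for load, because request completion is not modeled.

```python
class CloudServiceA:
    """Hypothetical cloud service implementation with built-in balancing logic."""

    def __init__(self, host: str, capacity: int) -> None:
        self.host = host
        self.capacity = capacity  # assumed local processing threshold
        self.neighbors: list["CloudServiceA"] = []
        self.processed: list[str] = []

    def _process(self, request: str) -> str:
        self.processed.append(request)
        return f"{self.host}: {request}"

    def _has_room(self) -> bool:
        return len(self.processed) < self.capacity

    def receive(self, request: str) -> str:
        """Entry point for consumer requests (1)."""
        if self._has_room():
            return self._process(request)
        # Local threshold reached: distribute to the least-loaded neighbor (2).
        for neighbor in sorted(self.neighbors, key=lambda n: len(n.processed)):
            if neighbor._has_room():
                return neighbor._process(request)
        raise RuntimeError("all cloud service implementations are at capacity")


service_a = CloudServiceA("Virtual Server A", capacity=2)
service_b = CloudServiceA("Virtual Server B", capacity=2)
service_c = CloudServiceA("Virtual Server C", capacity=2)
service_a.neighbors = [service_b, service_c]

# All consumer requests arrive at the implementation on Virtual Server A.
results = [service_a.receive(f"request-{i}") for i in range(6)]
```

In this sketch the first two requests are processed locally on Virtual Server A and the remaining four are shared between Virtual Servers B and C. A seventh request would raise `RuntimeError`, which mirrors the finite-capacity problem the pattern addresses once every pooled implementation is saturated.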

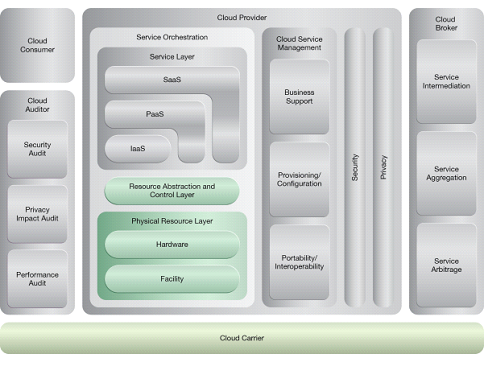

NIST Reference Architecture Mapping

This pattern relates to the highlighted parts of the NIST reference architecture, as follows:

This pattern is covered in CCP Module 5: Advanced Cloud Architecture.


This cloud computing mechanism is covered in:

Cloud Computing: Concepts, Technology & Architecture by Thomas Erl, Zaigham Mahmood, Ricardo Puttini (ISBN: 9780133387520, Hardcover, 260+ Illustrations, 528 pages)
