Lightweight Model Implementation (Khattak)

How can prediction latency be kept to a minimum in a realtime data processing system while guaranteeing acceptable accuracy?

Problem

A complex, high-accuracy model generally incurs high prediction latency when deployed in a realtime system. This degrades system performance and can ultimately lead to business loss.

Solution

A lightweight model with acceptable accuracy is trained and deployed to make realtime predictions.

Application

A statistical or simple machine learning algorithm, such as Naïve Bayes, is used to train a predictive model, which is then deployed in the realtime system to make low-latency predictions.
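As a rough illustration of why an algorithm such as Naïve Bayes counts as lightweight, the sketch below implements a minimal Gaussian Naïve Bayes classifier using only the Python standard library. Training stores one log prior, one mean vector and one variance vector per class, and a prediction is a single pass over those values. The class name `GaussianNaiveBayes`, the two features and the toy data are assumptions made for this example and are not part of the pattern.

```python
import math
from collections import defaultdict


class GaussianNaiveBayes:
    """Minimal Gaussian Naive Bayes for numeric features (illustrative only)."""

    def fit(self, X, y):
        # Group the training rows by class label.
        rows_by_class = defaultdict(list)
        for row, label in zip(X, y):
            rows_by_class[label].append(row)

        self.stats = {}
        total = len(X)
        for label, rows in rows_by_class.items():
            n = len(rows)
            columns = list(zip(*rows))
            means = [sum(col) / n for col in columns]
            # The small constant keeps every variance strictly positive.
            variances = [
                sum((v - m) ** 2 for v in col) / n + 1e-9
                for col, m in zip(columns, means)
            ]
            self.stats[label] = (math.log(n / total), means, variances)
        return self

    def predict(self, row):
        # Pick the class with the highest log prior plus log likelihood.
        best_label, best_score = None, -math.inf
        for label, (log_prior, means, variances) in self.stats.items():
            score = log_prior
            for v, m, var in zip(row, means, variances):
                score += -0.5 * math.log(2 * math.pi * var) - (v - m) ** 2 / (2 * var)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical toy data: [transaction amount, seconds since previous transaction]
X_train = [[12.0, 300.0], [15.0, 280.0], [9.0, 350.0],
           [950.0, 5.0], [870.0, 8.0], [990.0, 3.0]]
y_train = ["normal", "normal", "normal",
           "suspicious", "suspicious", "suspicious"]

model = GaussianNaiveBayes().fit(X_train, y_train)
print(model.predict([11.0, 310.0]))
print(model.predict([900.0, 6.0]))
```

The cost of each prediction grows with the number of classes multiplied by the number of features, and is independent of the size of the training dataset, which is what keeps latency low once the model is deployed.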

Mechanisms

Query Engine, Analytics Engine, Processing Engine, Resource Manager, Storage Device, Data Transfer Engine

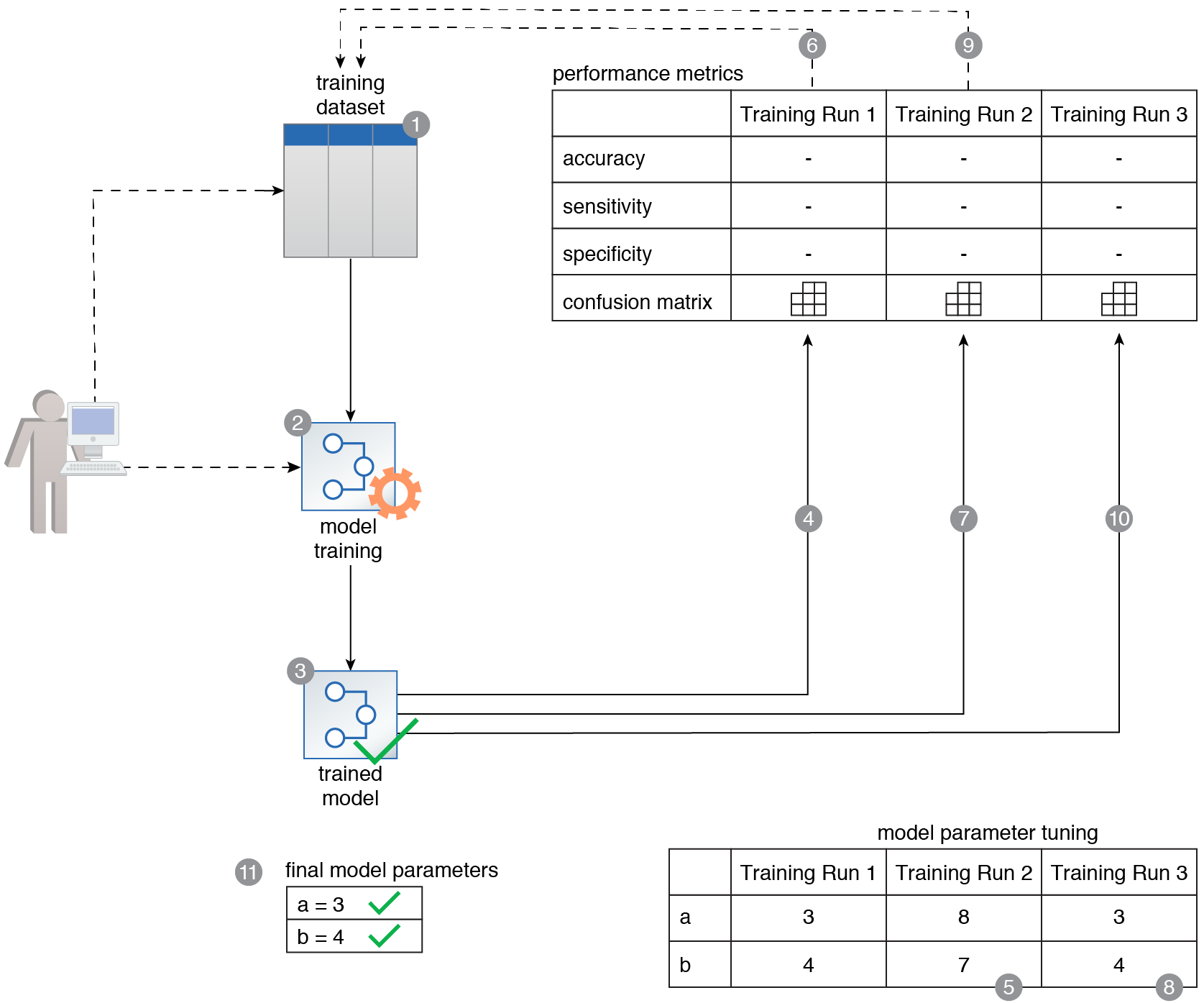

A training dataset is prepared (1). It is then used to train a model using a lightweight algorithm (2). This results in a lightweight model (3). The model is then deployed to a realtime prediction system (4). Streaming data enters the realtime prediction system at time t0 (5). At time t1, the realtime prediction system makes the first prediction, for the first data point. At time t2, it makes the second prediction, and so on (6). The latency incurred by each prediction is minimal (7).
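The seven steps can be sketched end to end as follows. This is a minimal sketch under stated assumptions: a nearest-centroid classifier stands in for the lightweight algorithm (the pattern itself names Naïve Bayes as an example), an in-memory list stands in for the streaming source, and the helper names `train_centroids` and `predict` as well as the data values are hypothetical.

```python
import statistics
import time


def train_centroids(X, y):
    """Steps 2-3: compute one mean vector (centroid) per class."""
    sums, counts = {}, {}
    for row, label in zip(X, y):
        acc = sums.setdefault(label, [0.0] * len(row))
        for i, value in enumerate(row):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc]
            for label, acc in sums.items()}


def predict(centroids, row):
    """Return the label of the centroid closest to the data point."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(row, centroid))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Step 1: prepare a (hypothetical) training dataset.
X_train = [[1.0, 1.0], [1.2, 0.8], [5.0, 5.0], [4.8, 5.2]]
y_train = ["low", "low", "high", "high"]

# Steps 2-4: train the lightweight model and hand it to the prediction loop.
centroids = train_centroids(X_train, y_train)

# Step 5: data points arrive one at a time (a list stands in for the stream).
stream = [[0.9, 1.1], [5.1, 4.9], [1.1, 1.0]]

# Steps 6-7: predict each point as it arrives and record the latency.
predictions, latencies = [], []
for point in stream:
    start = time.perf_counter()
    label = predict(centroids, point)
    latencies.append(time.perf_counter() - start)
    predictions.append(label)

print(predictions)
print(f"median prediction latency: {statistics.median(latencies) * 1e6:.1f} microseconds")
```

Timing each prediction individually, rather than the whole batch, mirrors the diagram: the figure of interest is the gap between a data point arriving and its prediction being available. The absolute numbers depend on the hardware and runtime.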

This pattern is covered in Machine Learning Module 2: Advanced Machine Learning.
