Life Before Service-Orientation

Note: It is fully acknowledged that past design paradigms have advocated principles and strategic goals similar to those of service-orientation. Several of these design approaches, in fact, directly inspired or influenced service-orientation (as explained on the Origins and Influences of Service-Orientation page). The following discussion focuses specifically on the silo-based design approach because it has persisted as the most common means by which applications are delivered.

To best appreciate why service-orientation has emerged and how it is intended to improve the design of automation systems, we need to compare before and after perspectives. By studying some of the common issues that have historically plagued IT, we can begin to understand the solutions proposed by this design paradigm.

In the world of business it makes a great deal of sense to deliver solutions capable of automating the execution of business tasks. Over the course of IT’s history, the majority of such solutions have been created using a common approach: identify the business tasks to be automated, define their business requirements, and then build the corresponding solution logic.

Figure 1 – A ratio of one application for each new set of automation requirements has been common.

This has been an accepted and proven approach to achieving tangible business benefits through the use of technology and has been successful at providing a relatively predictable return on investment.

Figure 2 – A sample formula for calculating ROI is based on a predetermined investment with a predictable return.

The ability to gain any further value from these applications is usually inhibited because their capabilities are tied to specific business requirements and processes (some of which will even have a limited lifespan). When new requirements and processes come our way we are forced to either make significant changes to what we already have or build a new application altogether.

In the latter case, although repeatedly building “disposable applications” is not the perfect approach, it has proven itself as a legitimate means of automating business. Let’s explore some of the lessons learned by first focusing on the positive.

- Solutions can be built efficiently because they only need to be concerned with the fulfillment of a narrow set of requirements associated with a limited set of business processes.

- The business analysis effort involved with defining the process to be automated is straightforward. Analysts are focused on only one process at a time and therefore concern themselves only with the business entities and domains associated with that one process.

- Solution designs are tactically focused. Although complex and sophisticated automation solutions are sometimes required, the sole purpose of each is to automate just one or a specific set of business processes. This predefined functional scope simplifies the overall solution design as well as the underlying application architecture.

- The project delivery lifecycle for each solution is streamlined and relatively predictable. Although IT projects are notorious for being complex endeavors, riddled with unforeseen challenges, when the delivery scope is well-defined (and doesn’t change), the process and execution of the delivery phases have a good chance of being carried out as expected.

- Building new systems from the ground up allows organizations to take advantage of the latest technology advancements. The IT marketplace progresses every year to the extent that we fully expect the technology we use to build solution logic today to be different and better tomorrow. As a result, organizations that repeatedly build disposable applications can leverage the latest technology innovations with each new project.

These characteristics of traditional solution delivery provide a good indication as to why this approach has been so popular. Despite its acceptance, though, it has become evident that there is still considerable room for improvement.

It Can Be Highly Wasteful

The creation of new solution logic in a given enterprise commonly results in a significant amount of redundant functionality. The effort and expense required to construct this logic are therefore also redundant.

It’s Not as Efficient as It Appears

Because of the tactical focus on delivering solutions for specific process requirements, the scope of development projects is highly targeted. Therefore, there is the constant perception that business requirements will be fulfilled at the earliest possible time. However, by continually building and rebuilding logic that already exists elsewhere, the process is not as efficient as it could be if the creation of redundant logic could be avoided.

It Bloats an Enterprise

Each new or extended application adds to the bulk of an IT environment’s system inventory. The ever-expanding hosting, maintenance, and administration demands can inflate an IT department in budget, resources, and size to the extent that IT becomes a significant drain on the overall organization.

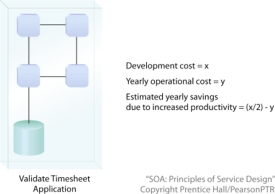

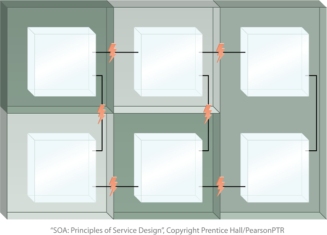

Figure 3 – This simple diagram portrays an enterprise environment containing applications with redundant functionality. The net effect is a larger enterprise.
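
To make the idea of redundant functionality concrete, the following minimal Python sketch tallies how often the same function is implemented across a small inventory of siloed applications. The application names, the function names, and the `redundancy_report` helper are all hypothetical and chosen only for illustration; they are not taken from any real enterprise or tool.

```python
from collections import Counter

# Hypothetical inventory: each siloed application and the functions it implements.
applications = {
    "order_entry": {"validate_customer", "calculate_tax", "create_order"},
    "invoicing": {"validate_customer", "calculate_tax", "create_invoice"},
    "returns": {"validate_customer", "create_refund"},
}


def redundancy_report(apps):
    """Return (implementations built, distinct functions, functions built more than once)."""
    # Count every implementation of every function across all applications.
    counts = Counter(fn for functions in apps.values() for fn in functions)
    total_built = sum(counts.values())
    distinct = len(counts)
    duplicates = {fn: n for fn, n in counts.items() if n > 1}
    return total_built, distinct, duplicates


total_built, distinct, duplicates = redundancy_report(applications)
print(f"implementations built: {total_built}")
print(f"distinct functions: {distinct}")
print(f"redundant implementations: {total_built - distinct}")
```

In this made-up inventory, eight implementations were built to deliver five distinct functions, so three of them represent repeated effort and additional logic to host and maintain.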

It Can Result in Complex Infrastructures and Convoluted Enterprise Architectures

Hosting numerous applications built from different generations of technologies, and perhaps even different technology platforms, often means that each imposes its own architectural requirements. The disparity across these “siloed” applications can lead to a counter-federated environment, making it challenging to plan the evolution of an enterprise and scale its infrastructure in response to that evolution.

Figure 4 – Different application environments within the same enterprise can introduce incompatible runtime platforms as indicated by the shaded zones.

Integration Becomes a Constant Challenge

Applications built only with the automation of specific business processes in mind are generally not designed to accommodate other interoperability requirements. Making these types of applications share data at some later point results in a jungle of convoluted integration architectures held together mostly through point-to-point patchwork or requiring the introduction of large middleware layers.

Figure 5 – A vendor-diverse enterprise can introduce a variety of integration challenges, as expressed by the lightning bolts that highlight points of concern when trying to bridge proprietary environments.
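
One way to see why point-to-point patchwork becomes hard to sustain is to count connections. As a rough sketch, assume the worst case in which every application must exchange data directly with every other. The number of links then grows with the square of the number of applications, whereas routing everything through a single intermediary (one simplified reading of a middleware layer) needs one link per application. Both function names below are hypothetical, and real integration landscapes rarely match either extreme exactly.

```python
def point_to_point_links(n_apps: int) -> int:
    """Links needed if every application integrates directly with every other."""
    return n_apps * (n_apps - 1) // 2


def intermediary_links(n_apps: int) -> int:
    """Links needed if every application connects once to a shared intermediary."""
    return n_apps


for n in (5, 10, 20):
    print(f"{n} applications: "
          f"{point_to_point_links(n)} point-to-point links, "
          f"{intermediary_links(n)} links via an intermediary")
```

Under these assumptions, 5 applications need up to 10 direct links, 10 need up to 45, and 20 need up to 190, which helps explain why integration effort can outpace the growth of the application inventory itself.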